If you’re reading this, you probably searched online for virtual filesystems and how to merge the contents of multiple hard drives, mount points, or folders into one virtual mount point.

Why use Unionfs?

In my case, the problem was how to combine the contents of multiple remote backup shares and folders holding Proxmox VPS backups so that they show up under one mount point (directory). This lets clients see all seven daily backups from the past week, instead of me having to attach seven different locations to show all seven backup files.

This saves me a lot of time and frustration.

Proxmox backup(

My provider, rsync.net, creates seven daily snapshots. Each one is named by date and can be reached through the hidden .zfs directory:

    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-24/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-25/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-26/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-27/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-28/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-29/

As I have this mounted locally via
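The folder names change every day, so a script cannot hard-code them. Below is a minimal sketch that lists whichever snapshots currently exist, using a glob. It assumes the `daily_YYYY-MM-DD` naming shown above. `list_daily_snapshots` is a hypothetical helper name and is not part of rsync.net or unionfs-fuse.

```shell
#!/usr/bin/env bash
# list_daily_snapshots: hypothetical helper (not part of rsync.net or unionfs-fuse).
# Usage: list_daily_snapshots [BASE]
# Prints each daily_* snapshot directory under BASE on its own line, in sorted
# order, without the trailing slash. Assumes daily_YYYY-MM-DD names, which
# makes sorted order chronological (oldest first).
list_daily_snapshots() {
    local base="${1:-/mnt/rsyncnet/.zfs/snapshot}"
    local dir restore
    # Remember the current nullglob setting so it can be restored afterwards.
    restore=$(shopt -p nullglob || true)
    # With nullglob, a pattern that matches nothing expands to nothing
    # instead of to the literal pattern text.
    shopt -s nullglob
    # The trailing "/" makes the glob match directories only; bash sorts matches.
    for dir in "$base"/daily_*/; do
        printf '%s\n' "${dir%/}"
    done
    eval "$restore"
}
```

With the seven folders above, `list_daily_snapshots /mnt/rsyncnet/.zfs/snapshot` should print those paths from 2019-08-23 to 2019-08-29, without trailing slashes.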

This is configured in Proxmox as

So all of these paths will be merged, and their contents will be unified under “/

    /mnt/rsyncnet/coby-backups
    /mnt/morgan
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-24/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-25/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-26/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-27/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-28/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-29/

If this looks complex, it definitely is. I needed to dynamically mount only the snapshots that are available, which rotate each day with

It would be tedious to maintain and redo

If we were going to do this

    unionfs-fuse -o cow,max_files=32768 \
        -o allow_other,use_ino,suid,dev,nonempty \
        /mnt/rsyncnet/coby-backups=RW:/mnt/morgan=RW:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-24/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-25/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-26/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-27/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-28/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-29/coby-backups=RO \
        /sdd/rsyncnet-backups-link

The order is important. Branches are listed in priority order, and the leftmost branch takes precedence. Each branch is written as path=MODE and separated from the next by a colon. For example, “/mnt/path=RW:” mounts “/mnt/path” as a read-write branch, while =RO marks a branch as read-only.

For example, new backups will be written to the RW mount point, in my case “/mnt/
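The branch list is simply the paths joined by `:`, each tagged `=RW` or `=RO`, with the highest priority first. Below is a minimal sketch that builds this list from two groups of paths. It assumes, as in the command above, that every writable branch should outrank every read-only one. `join_branches` is a hypothetical helper, and it does not escape paths that themselves contain `:` or `=`.

```shell
#!/usr/bin/env bash
# join_branches: hypothetical helper that builds a unionfs-fuse branch list.
# Usage: join_branches RW_PATH... -- RO_PATH...
# Paths before "--" are tagged =RW and paths after it are tagged =RO.
# Argument order is preserved, so the first path gets the highest priority.
join_branches() {
    local mode=RW out="" path
    for path in "$@"; do
        if [[ "$path" == "--" ]]; then
            mode=RO
            continue
        fi
        # ${out:+:} adds a ":" separator only once the list is non-empty.
        out+="${out:+:}${path}=${mode}"
    done
    printf '%s\n' "$out"
}
```

For example, `join_branches /mnt/rsyncnet/coby-backups /mnt/morgan -- /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/coby-backups` prints `/mnt/rsyncnet/coby-backups=RW:/mnt/morgan=RW:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/coby-backups=RO`, which is the start of the branch argument used above.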

Installing unionfs-fuse on the system

    root@coby:~# apt list --installed | grep unionfs
    WARNING: apt does not have a stable CLI interface. Use with caution in scripts.
    unionfs-fuse/oldstable,now 1.0-1+b1 amd64 [installed]
    root@coby:~#

To install it, we ran the following as root:

    sudo apt-get -y install unionfs-fuse
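You can also confirm from a script that the install worked. Below is a minimal sketch that checks for the `unionfs-fuse` executable with the shell builtin `command -v`. It assumes the package puts the binary on `PATH`. It checks for the executable only, not the package database that `apt list --installed` reads. `require_command` is a hypothetical name.

```shell
#!/usr/bin/env bash
# require_command: hypothetical helper.
# Usage: require_command COMMAND [PACKAGE]
# Returns 0 if COMMAND is found on PATH. Otherwise it prints an install hint
# to stderr (PACKAGE defaults to COMMAND) and returns 1.
require_command() {
    local cmd="$1" pkg="${2:-$1}"
    if command -v "$cmd" >/dev/null 2>&1; then
        return 0
    fi
    echo "missing: $cmd (try: apt-get install $pkg)" >&2
    return 1
}
```

A mount script could then start with `require_command unionfs-fuse || exit 1`.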

Next, we need to create the directory for the mount point. In my

    mkdir /sdd/rsyncnet-backups-link
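The mount command passes `nonempty`, so FUSE will mount over a directory that already holds files. Those files stay hidden until the union is unmounted. Below is a minimal sketch, meant to run before mounting, that creates the mount point idempotently and warns in that case. `ensure_mountpoint` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# ensure_mountpoint: hypothetical helper, meant to run before mounting.
# Usage: ensure_mountpoint DIR
# Creates DIR if it is missing (mkdir -p does nothing if it already exists).
# Warns on stderr if DIR already contains entries, because they will be
# hidden underneath the union while it is mounted.
ensure_mountpoint() {
    local dir="$1"
    mkdir -p -- "$dir" || return 1
    # ls -A lists everything except "." and "..", so empty output means an empty dir.
    if [[ -n "$(ls -A -- "$dir")" ]]; then
        echo "warning: $dir is not empty; its contents will be hidden while the union is mounted" >&2
    fi
    return 0
}
```

`ensure_mountpoint /sdd/rsyncnet-backups-link` does the same job as the `mkdir` above, but it does not fail when the directory already exists.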

Now that it’s installed, you can test your command from the step above. Note that the \ line continuations were necessary, so I edited the example I found in

Once entered, it should not return an error. The unionfs mount will then appear in the output of “df -h”, as in the very last entry below:

    root@coby:~# df -h
    Filesystem                  Size  Used Avail Use% Mounted on
    udev                         63G     0   63G   0% /dev
    tmpfs                        13G  1.3G   12G  11% /run
    rpool/ROOT/pve-1            424G  276G  149G  65% /
    tmpfs                        63G   37M   63G   1% /dev/shm
    tmpfs                       5.0M     0  5.0M   0% /run/lock
    tmpfs                        63G     0   63G   0% /sys/fs/cgroup
    rpool                       149G     0  149G   0% /rpool
    rpool/ROOT                  149G     0  149G   0% /rpool/ROOT
    rpool/data                  149G     0  149G   0% /rpool/data
    tmpfs                        13G     0   13G   0% /run/user/0
    /dev/fuse                    30M   72K   30M   1% /etc/pve
    /dev/sdd                    916G   80M  870G   1% /sdd
    user@hostname:/remote/path  708G  131G  577G  19% /mnt/morgan  <<<< remote sshfs mounts that are also mounted under the unionfs
    user@hostname:              1.1T  538G  563G  49% /mnt/rsyncnet  <<<< remote sshfs mounts that are also mounted under the unionfs
    unionfs-fuse                1.1T  539G  565G  49% /sdd/rsyncnet-backups-link  <<<< here is our unionfs mount of the 9 directories from above
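`df -h` is handy for checking by eye. A script can instead check whether the mount point is listed in the kernel's mount table. Below is a minimal sketch that reads `/proc/mounts` on Linux; where util-linux is installed, `mountpoint -q` does the same job. `is_mounted` is a hypothetical helper. It assumes mount paths contain no spaces, because `/proc/mounts` escapes spaces as `\040`.

```shell
#!/usr/bin/env bash
# is_mounted: hypothetical helper.
# Usage: is_mounted PATH [MOUNT_TABLE]
# Returns 0 if PATH is the mount point (2nd field) of any entry in
# MOUNT_TABLE, which defaults to Linux's /proc/mounts. Assumes PATH has no
# spaces, since /proc/mounts writes those as \040.
is_mounted() {
    local target="${1%/}" table="${2:-/proc/mounts}"
    # Stripping the trailing "/" turns "/" into an empty string; restore it.
    if [[ -z "$target" ]]; then
        target=/
    fi
    # awk exits 0 only if some line's second field equals the target.
    awk -v t="$target" '$2 == t { found = 1 } END { exit !found }' "$table"
}
```

For example, `is_mounted /sdd/rsyncnet-backups-link && echo mounted` prints `mounted` only while the union is up.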

You can test this now by changing into the path with cd. It should show the combined contents of all the folders and their subfolders, unified in one place. If that worked, you’re all set. This can be automated by using

Additional Source References

https://www.linuxjournal.com/article/7714

https://linux.die.net/man/8/unionfs

https://helpmanual.io/help/unionfs/

The next part is for those of you who have dynamically changing paths that you need to mount via

See the script below for reference and modify it to suit your needs. I recommend keeping the mount command commented out at first. Run the script with only the echo commands active, check which paths it outputs, and confirm your formatting is correct before enabling the mount.

    #!/bin/bash
    # Run via "/bin/bash snapshot_folder_detection.sh" from cron after the snapshot
    # rotation, to remount the current daily snapshots into one unified folder
    # for Proxmox backup access.

    # Define the base path to check for folders.
    BasePath=/mnt/rsyncnet/.zfs/snapshot

    # Define the unified mount point.
    UnionMountMergePath=/sdd/rsyncnet-backups-link

    # Collect the snapshot (or other dynamically updated) folders. In this example
    # we want the paths of all the subfolders under "/mnt/rsyncnet/.zfs/snapshot/";
    # formatting is important. The trailing "/" in the glob matches directories
    # only, and each array entry keeps that trailing "/". nullglob leaves the
    # array empty, instead of holding the literal pattern, when nothing matches.
    shopt -s nullglob
    dirs=("$BasePath"/*/)

    # In my example there are always 7 directories, so only that many are used
    # below. Warn if the count differs.
    if (( ${#dirs[@]} != 7 )); then
        echo "warning: expected 7 snapshot folders, found ${#dirs[@]}" >&2
    fi

    # For testing, these lines print the detected folders. A varying number of
    # folders would need custom scripting that loops over the array.
    echo "${dirs[0]}"
    echo "${dirs[1]}"
    echo "${dirs[2]}"
    echo "${dirs[3]}"
    echo "${dirs[4]}"
    echo "${dirs[5]}"
    echo "${dirs[6]}"

    # Next, put your base command here. Then copy it once below and modify the
    # copy with the array variables so it merges the folders the way you want.

    # My base command, commented out for example:
    #unionfs-fuse -o cow,max_files=32768 \
    #    -o allow_other,use_ino,suid,dev,nonempty \
    #    /mnt/rsyncnet/coby-backups=RW:/mnt/morgan=RW:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-24/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-25/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-26/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-27/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-28/coby-backups=RO:/mnt/rsyncnet/.zfs/snapshot/daily_2019-08-29/coby-backups=RO \
    #    "$UnionMountMergePath"

    # My command edited with the array variables spliced in, commented out:
    #unionfs-fuse -o cow,max_files=32768 \
    #    -o allow_other,use_ino,suid,dev,nonempty \
    #    /mnt/rsyncnet/coby-backups=RW:/mnt/morgan=RW:"${dirs[0]}"coby-backups=RO:"${dirs[1]}"coby-backups=RO:"${dirs[2]}"coby-backups=RO:"${dirs[3]}"coby-backups=RO:"${dirs[4]}"coby-backups=RO:"${dirs[5]}"coby-backups=RO:"${dirs[6]}"coby-backups=RO \
    #    "$UnionMountMergePath"

Example:

    root@coby:~# bash snapshot_folder_detection.sh
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-23/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-24/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-25/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-26/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-27/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-28/
    /mnt/rsyncnet/.zfs/snapshot/daily_2019-08-29/
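The script above handles exactly seven folders and notes that a varying number would need a loop over the array. Below is a minimal sketch of that loop. It assumes the same layout, with a `coby-backups` folder inside each snapshot. Unlike the script, it skips any snapshot that lacks the subfolder, so unionfs-fuse is never given a branch that does not exist. `build_snapshot_branches` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# build_snapshot_branches: hypothetical helper.
# Usage: build_snapshot_branches BASE SUBDIR RW_BRANCH...
# Prints a unionfs-fuse branch list: each RW_BRANCH tagged =RW (in the order
# given), then BASE/<snapshot>/SUBDIR tagged =RO for every snapshot folder
# under BASE, however many there are. Snapshots without SUBDIR are skipped.
build_snapshot_branches() {
    local base="$1" subdir="$2"
    shift 2
    local out="" path snap restore
    restore=$(shopt -p nullglob || true)
    shopt -s nullglob
    for path in "$@"; do
        out+="${out:+:}${path}=RW"
    done
    # Same glob as the script: one sorted entry per snapshot, each ending in "/".
    for snap in "$base"/*/; do
        [[ -d "${snap}${subdir}" ]] || continue
        out+="${out:+:}${snap}${subdir}=RO"
    done
    eval "$restore"
    printf '%s\n' "$out"
}

# Example usage (commented out so that loading this file mounts nothing):
# branches=$(build_snapshot_branches /mnt/rsyncnet/.zfs/snapshot coby-backups \
#     /mnt/rsyncnet/coby-backups /mnt/morgan)
# unionfs-fuse -o cow,max_files=32768 -o allow_other,use_ino,suid,dev,nonempty \
#     "$branches" /sdd/rsyncnet-backups-link
```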

Next, adjust the script if you’re not getting the desired effect. Once that is corrected, you can uncomment or add your working command and then set it up as a
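When the job runs from cron after each rotation, the union from the previous run is usually still mounted. Mounting again on top of it would either fail or stack a second union over the first. Below is a minimal sketch that unmounts first. It assumes the FUSE 2 `fusermount -u` tool (FUSE 3 systems provide `fusermount3` instead) and reuses the options from the command above. `remount_union` and `DRY_RUN` are hypothetical names.

```shell
#!/usr/bin/env bash
# remount_union: hypothetical helper for a daily cron job.
# Usage: remount_union BRANCH_LIST MOUNT_POINT
# If MOUNT_POINT is listed in /proc/mounts, it is unmounted first with
# "fusermount -u" (FUSE 2 tool; an assumption). The union is then mounted
# again with the same options as the manual command. With DRY_RUN=1, the
# commands are printed via echo instead of being executed.
remount_union() {
    local branches="$1" mnt="$2"
    local -a run=()
    if [[ "${DRY_RUN:-0}" == 1 ]]; then
        run=(echo)
    fi
    # Mount points appear space-delimited in /proc/mounts.
    # -F matches a fixed string; -s stays quiet if the file is missing.
    if grep -qsF -- " ${mnt} " /proc/mounts; then
        "${run[@]}" fusermount -u "$mnt" || return 1
    fi
    "${run[@]}" unionfs-fuse -o cow,max_files=32768 \
        -o allow_other,use_ino,suid,dev,nonempty \
        "$branches" "$mnt"
}
```

Running it once as `DRY_RUN=1 remount_union "/mnt/rsyncnet/coby-backups=RW:/mnt/morgan=RW" /sdd/rsyncnet-backups-link` (with a shortened branch list) prints the commands without touching the system. You see the unmount only if the union is currently mounted.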

Results

The images below show an example of a merged directory.

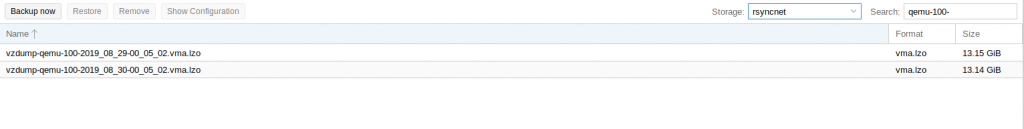

The first image shows what’s on the live backup server read/write under “/mnt/

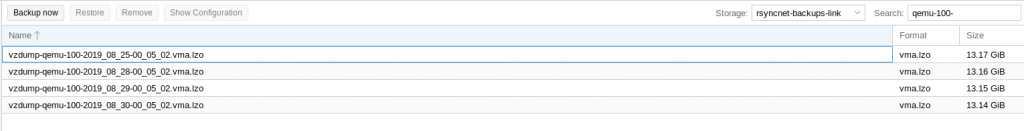

Now, when we select the

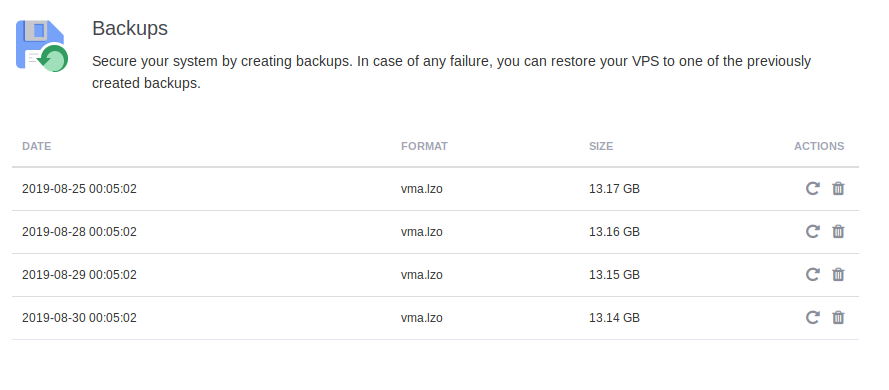

In the client portal due to the
